This morning I poured myself a thermos of coffee and left for lab, abandoning it on the kitchen counter. I nearly forgot about the paper I had to review this week until I saw the deadline looming on my desk calendar. And I didn’t remember my friend’s birthday until logging into Facebook—and I’m always the one people rely on to remember birthdays.

I sure could use a little memory boost. Unfortunately, despite the growing popularity of brain-training apps and programs like Lumosity, CogniFit, CogMed, and Jungle Memory, I’m not going to find any help here.

They're totally bogus, you see.

Lumosity co-founder Michael Scanlon means well, though. He started up the company in 2005 with Kunal Sarkar and David Drescher, after dropping out of his neuroscience Ph.D. at Stanford. Since then, Lumosity has reached more than 35 million people, and this time last year its mobile app was being downloaded nearly 50,000 times a day.

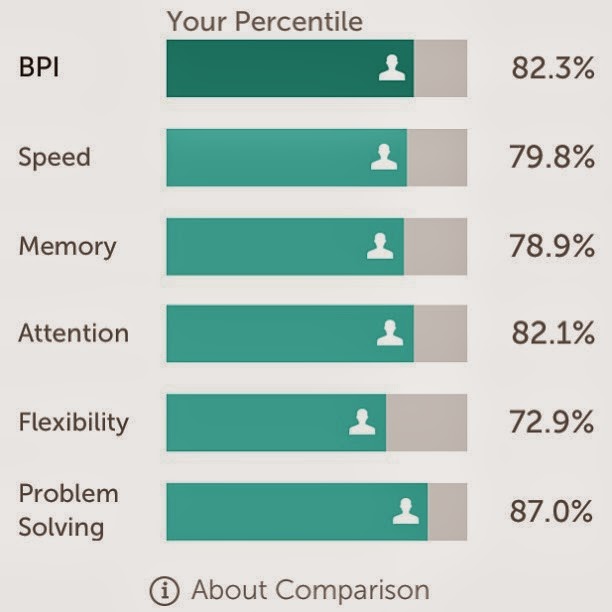

“Lumosity is based on the science of neuroplasticity,” the commercials tout, and Lumosity’s website advertises its ability to “train memory and attention” through a “personalized training program.” This plan includes more than 40 games designed to boost memory, flexibility, attention, processing speed, and general problem-solving ability.

Lumosity has even put out a fancy PDF describing the science behind their games and changes in individuals’ BPT (brain performance test) scores before and after training.

A year after Lumosity’s official launch in 2007, Susanne Jaeggi and colleagues at the University of Michigan published a study suggesting that memory training not only enhanced short-term memory ability, but actually boosted IQ by an entire point per hour of training. Wow!

But Thomas Redick and colleagues at Georgia Tech thought it sounded too good to be true. With a skeptical eye, they attempted to replicate Jaeggi’s findings. This time, unlike Jaeggi’s study, they tested 17 different cognitive tasks, including tasks for fluid intelligence, multitasking, working memory, and perceptual speed. They also had two control groups: one that underwent placebo training, and one that did no training whatsoever.

After 20 sessions, Redick found that while participants improved performance on the tasks at hand, their newfound abilities never actually transferred to any global measure of intelligence or cognition. Their study was published last May.

Another investigation, published in December by a group at Case Western Reserve University, employed a similar placebo-controlled design. Focusing on working memory and abstract problem-solving, they found that even training for up to 20 days resulted in no significant improvement in mental capacity. Again, though, the researchers did note that performance on the specific tasks improved.

When Adrian Owen and colleagues at Cambridge University reported similar results after a six-week online cognitive training regimen with 11,430 participants, Owen attributed these task improvements to familiarity, not a true change in cognitive ability.

And a recent meta-analysis of 23 studies confirmed these and others’ findings. Monica Melby-Lervåg and Charles Hulme of the University of Oslo concluded that brain-training programs did indeed produce short-term, highly specific improvements in the task at hand, but no generalized improvements to overall intelligence, memory, attention, or other cognitive abilities.

In other words, according to these studies, it seems that remembering which shape came before the circle in the sequence will not help you remember that one last item on your grocery list as you’re out shopping. And it certainly won’t raise your IQ by any significant amount.

In this age of tablets and mobile devices, it’s unfortunate that something so readily available cannot help us exercise our minds in ways that may benefit us beyond the screen.

And these revelations may be especially bad news for many who rely on apps like Lumosity every day: the elderly attempting to ward off dementia, for example, or those suffering from brain trauma and individuals with learning disabilities.

The takeaway message from these studies?

If you enjoy the games, by all means continue. But don’t necessarily believe the hype, and don’t keep wasting your money if you’re using these apps to truly improve your memory, reaction time, or intelligence in the longer term.

If the idea of using mental exercise to stave off the effects of age on memory and other functions still appeals, then continue to expose yourself to a variety of problem-solving challenges throughout the day, and not necessarily on the computer.

Or, if you’re anything like me, just try to remember where you actually placed your morning coffee before you leave the house.

The shot of caffeine probably does more for my workday brainpower than any brain-training app will.

--

Originally published at The Conversation UK.

Image credit: Francisco Martins (Flickr), Gord Fynes (Flickr), Aaron Gouveia

Chooi, W.-T. 2012. Working memory training does not improve intelligence in healthy young adults. Intelligence 40(6). DOI: 10.1016/j.intell.2012.07.004.

Jaeggi, S.M., M. Buschkuehl, J. Jonides, and W.J. Perrig. 2008. Improving fluid intelligence with training on working memory. Proc Nat Acad Sci DOI: 10.1073/pnas.0801268105.

Melby-Lervåg, M. and C. Hulme. 2013. Is working memory training effective? A meta-analytic review. Dev Psychol 49(2): 270-291.

Owen, A.M., A. Hampshire, J.A. Grahn, R. Stenton, S. Dajani, A.S. Burns, R.J. Howard, and C.G. Ballard. 2010. Putting brain training to the test. Nature 465: 775-778.

Redick, T.S., Z. Shipstead, T.L. Harrison, K.L. Hicks, D.Z. Hambrick, M.J. Kane, and R.W. Engle. 2013. No evidence of intelligence improvement after working memory training: A randomized, placebo-controlled study. J Exp Psychol Gen 142(2): 359-379.


Love this! Feeling rather remiss I didn't include you in my top bloggers list ... but a new discovery is better than no discovery!

As a PhD student, you'll certainly have an upper hand at interpreting research, so allow me to ask a question that comes to mind from the Redick study. Why do they conclude failure to prove is proof of failure? In my experience, the vast majority of studies like theirs fail to control for, amongst many things, affective processes.

Bob Sternberg thought enough of the Jaeggi study to comment favorably in 2008. He raised half a dozen questions at the time for future exploration, all of which I believe continue to be legitimate topics for ongoing research.

I also wonder about the construct validity of the metas by Hulme and others. Like Redick, the majority of the underlying studies were based on interventions of +/- 20 sessions. I think most of us recognize the level of fluency we attained our first semester of high school French. To then judge a language teacher by my inability to 'transfer' and order a meal in Paris probably strikes most of us as a bit unfair.

To continue the analogy, I would point out that the State Department employs a multimodal approach in maximizing adult brains' growth in new-language fluency: audio, visual and, perhaps most importantly (re affective processes), human.

To expect a computer on its own to deliver Gf growth seems on its face to be naive. The Bunge lab at Berkeley combined computers with human engagement, and appeared to spark quite remarkable changes in 10-year-olds.

Their framework strikes me as the most promising.

Your thoughts?

Hi Tom, thanks for your comment and for reading the post!

The problem with studies like Jaeggi's is that you end up testing "intelligence" through puzzle-like tasks right after you've trained yourself to become better at other puzzle-like tasks. She has recognized this as a weakness and has suggested that future studies examine how training games can translate into school or work performance. Fluid ability (as Sternberg agrees) definitely shows promise in terms of being "trainable." The goal is to optimize what kind of training will translate to the best real-life applications.

Your comment on construct validity is interesting. The multimodal approach used by the State Department is exactly what allows it to thrive. I wouldn't expect myself to be able to order a meal in Paris just because I've memorized a list of 50 French nouns and gotten an A on my exam, much like I wouldn't expect that playing memory games on my smartphone will suddenly mean I no longer need to bring my grocery list to the store with me.

The work by the Bunge lab shows exactly the kind of promise that a phone app will probably never be able to deliver!